I am a post-doc and may need to leave the group at any moment in favor of a permanent position. Since the group is focused on physics research, the people who come after I leave may not have the skills needed to maintain the server. Hence the need for a data management system that requires little to no maintenance. The upside of Git LFS's filter-based approach is its ease of use; however, there is currently a performance penalty because of how Git works internally.

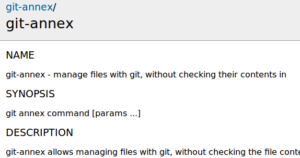

So, it would make sense to create a "data" repository where most of the files will be binary ones, stored and tracked via LFS. However, it seems that this will upload the files to GitLab's servers, defeating the whole purpose of having a storage computer (also, we would need to buy storage from them). The solution seems to be for us to deploy the Community Edition (CE) of GitLab on our storage server.

My questions are:

1. Is that the only way? Ideally, I would like to be able to tell GitLab that our files are stored elsewhere, so that the Git repo just points to that "elsewhere", which is our storage server.
2. If I must deploy GitLab CE, how hard is it to maintain such a server? My ideal scenario is zero maintenance, i.e. set up once and, as long as no one updates the OS, it should not break (see the comment below).
3. In case it breaks, will the data be stored in a way that someone can easily retrieve, i.e. it will not be compacted into a binary blob where you need the server running to retrieve it? Ideally, I should even be able to read the data from within the server with the service running, since we may do some data analysis on this computer.

Comment about point 2: I know that not performing regular updates may leave security holes. However, the stored data does not contain sensitive information. We do not deal with personal information, and leaks or data loss would have only minor consequences.

Git LFS uses a filter-based approach, meaning that you only need to specify the tracked files with one command, and it handles the rest invisibly. When you clone the repository, GitHub uses the pointer file as a map to go and find the large file for you. You can also use Git LFS with GitHub Desktop. Different maximum size limits for Git LFS apply depending on your GitHub plan. If you exceed the limit of 5 GB, any new files added to the repository will be rejected silently by Git LFS. Apart from that, git-annex has an equivalent approach for storing large files and is even more flexible than Git LFS.
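To make the pointer-file idea mentioned above more concrete, here is a minimal Python sketch of what such a pointer looks like and how it maps back to a large file. It assumes the version 1 pointer format of the Git LFS specification (a `version` line, an `oid sha256:...` line and a `size` line). The function names `make_pointer`, `parse_pointer` and `is_pointer` are hypothetical helpers written for illustration; they are not part of Git LFS or GitLab.

```python
import hashlib

# Version line used by Git LFS v1 pointer files.
LFS_SPEC_URL = "https://git-lfs.github.com/spec/v1"


def make_pointer(content: bytes) -> str:
    """Build the small text stub that Git stores instead of the large file.

    Hypothetical helper: it mimics the v1 pointer layout, it does not call Git LFS.
    """
    oid = hashlib.sha256(content).hexdigest()
    return f"version {LFS_SPEC_URL}\noid sha256:{oid}\nsize {len(content)}\n"


def parse_pointer(text: str) -> dict:
    """Split a pointer file into its fields (version, hash algorithm, oid, size)."""
    fields = dict(line.split(" ", 1) for line in text.splitlines() if line)
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": algo,
        "oid": digest,
        "size": int(fields["size"]),
    }


def is_pointer(text: str) -> bool:
    """Rough check for whether a checked-out file is still an LFS pointer."""
    return text.startswith(f"version {LFS_SPEC_URL}\n")


if __name__ == "__main__":
    # Stand-in for a large binary data file.
    data = b"detector run 42: raw samples ..."
    pointer = make_pointer(data)
    print(pointer)
    print(parse_pointer(pointer))
```

Because the `oid` is simply the SHA-256 hash of the file's contents, a stored object can in principle be checked against its pointer with nothing more than a hash tool. How and where a particular server lays out those objects on disk is a separate question that this sketch does not answer.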
I am part of a small physics research team (10-15 people) that recently acquired a storage server, and I will be responsible for setting it up. As such, I needed to think about the best way to organize the data we will be generating. When researching the best way to do this, I came across Git LFS. We already use GitLab (the cloud version) to version control our code and easily share it among ourselves.
